In a historic confrontation between the U.S. government and Silicon Valley, President Donald Trump has officially banned AI company Anthropic from the federal government. The February 27, 2026, directive forces all federal agencies to halt their use of the company’s technology. Simultaneously, rival firm OpenAI stepped into the void, quietly signing a classified agreement with the Pentagon.

This is not a simple vendor dispute but a constitutional and ethical battle that will define the future of artificial intelligence in America.

How It All Started: The $200 Million Contract and the Venezuela Trigger

The rift began quietly after a historic July 2025 deal. The U.S. Department of Defense awarded Anthropic a two-year, $200 million contract, making Claude the first commercial frontier AI model deployed inside the Pentagon’s classified networks.

However, the relationship fractured in January 2026 following a covert U.S. military operation in Venezuela that resulted in the capture of President Nicolás Maduro. Pentagon officials alleged that Anthropic privately questioned its partner, Palantir, about whether its AI model, Claude, was involved in the combat operation. While Anthropic denied discussing the specifics of any military mission, the inquiry severely damaged the relationship.

The Pentagon’s Ultimatum: Drop Your Red Lines or Lose Everything

Following the Venezuela incident, Defense Secretary Pete Hegseth summoned Anthropic CEO Dario Amodei to the Pentagon in early February. Hegseth delivered a blunt ultimatum: Anthropic must allow Claude to be used for “all lawful purposes” without company oversight or restrictions.

Anthropic firmly refused to lift two specific internal restrictions:

- No fully autonomous weapons systems: The company argued that current AI models are not reliable enough to make lethal decisions without human oversight.

- No mass domestic surveillance: Anthropic classified this as a violation of fundamental rights under the U.S. Constitution.

The Pentagon established a final deadline of 5:01 PM EST on Friday, February 27, 2026, to drop the restrictions.

The Ban and the Immediate Fallout

When Anthropic refused to capitulate, President Trump intervened directly. Taking to Truth Social, Trump accused the company of trying to “STRONG-ARM the Department of War” and stated their actions put American lives and national security in jeopardy.

Trump immediately issued a formal directive enforcing a federal ban, allowing a six-month phaseout period for agencies that had already integrated Claude. Minutes later, Defense Secretary Hegseth designated Anthropic a “Supply-Chain Risk to National Security”. This sweeping designation mandates that no contractor or supplier doing business with the U.S. military can conduct commercial activity with Anthropic.

Within hours of the Anthropic ban, OpenAI CEO Sam Altman announced his company had reached an agreement to deploy OpenAI models on classified military networks.

| Metric | Anthropic’s Financial Standing Prior to the Ban |
| --- | --- |
| Latest Valuation | $380 Billion (as of Feb 12, 2026) |
| Recent Funding | $30 Billion funding round closed |
| 2026 Revenue Projection | Up to $18 Billion |
| Pentagon Contract Value | $200 Million (less than 2% of projected revenue) |

Anthropic Refused. Then Trump Intervened.

Amodei did not blink. On Thursday, February 26th, he published a detailed public statement: “We cannot in good conscience accede to their request… Anthropic understands that the Department of War, not private companies, makes military decisions. We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner.”

He insisted that the two disputed uses, autonomous weapons and mass surveillance, were “simply outside the bounds of what today’s technology can safely and reliably do” and that, to his knowledge, “these exceptions have not affected a single government mission to date”.

Emil Michael, Pentagon Undersecretary for Research and Engineering, responded on X by accusing Amodei of lying and harboring a “God-complex”, writing: “He wants nothing more than to try to personally control the US Military and is ok putting our nation’s safety at risk”.

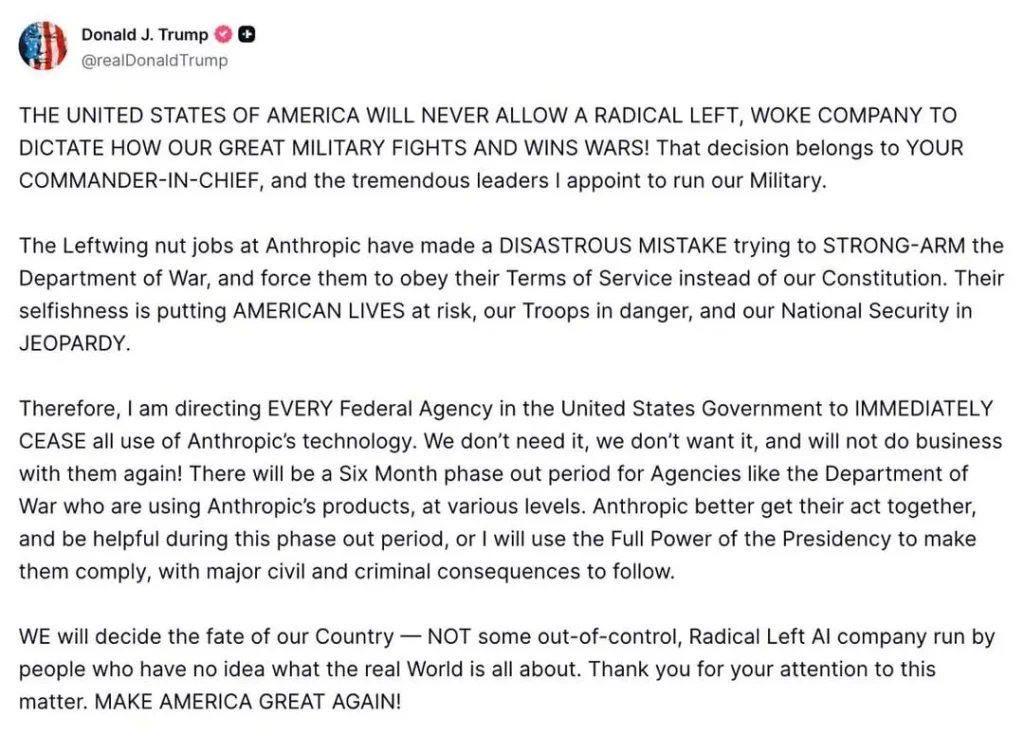

When the 5:01 PM deadline passed without capitulation, President Trump took to Truth Social with a blistering post:

“THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS… The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.”

Trump then issued the formal directive: every federal agency must immediately stop using Anthropic’s technology, with a six-month phaseout period granted to agencies — particularly the Pentagon — that had already integrated Claude deeply into active systems. He warned he would use “the Full Power of the Presidency” to enforce compliance, with “major civil and criminal consequences” threatened against any agency failing to transition.

Hegseth’s Nuclear Option: Supply-Chain Risk Designation

Minutes after Trump’s post, Defense Secretary Hegseth formalized his own blow. He officially designated Anthropic a “Supply-Chain Risk to National Security” — a classification that carries sweeping implications:

“Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.”

This means every defense contractor, supplier, and military partner — including Lockheed Martin, Raytheon, Palantir, and thousands of smaller firms — would be potentially prohibited from using Claude for any purpose. Hegseth gave Anthropic six months to continue providing transition services to the Pentagon before the final cut.

He called it a “master class in arrogance and betrayal” and declared: “America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.”

OpenAI: The Opportunistic Winner

Within hours of the Anthropic ban, OpenAI CEO Sam Altman announced that his company had reached an agreement with the Pentagon to deploy OpenAI models on classified military networks. The timing was remarkable — and almost certainly not coincidental.

Notably, Altman had told CNBC earlier that same morning that OpenAI shares Anthropic’s red lines, also opposing autonomous weapons and domestic surveillance. He wrote in an internal memo to staff that OpenAI was seeking exclusions for both of those use cases in any Pentagon deal. Yet OpenAI’s framing was diplomatic rather than confrontational, enabling Altman to secure the deal while Amodei lost his.

OpenAI, Google, and Elon Musk’s xAI all hold existing Pentagon contracts and have agreed to allow their tools for any “lawful” purpose. xAI had only days earlier become the second company — after Anthropic — cleared for classified Pentagon network access.

Anthropic Pushes Back: Lawsuit and Legal Challenge

Anthropic is not surrendering. In a statement Friday evening, the company announced it would challenge the supply-chain risk designation in federal court, calling it “legally unsound” and warning it sets “a dangerous precedent for any American company that negotiates with the government”.

Crucially, Anthropic’s legal team challenged the scope of Hegseth’s directive, arguing that under federal law the supply-chain risk designation can only restrict Claude’s use within DoD contracts — it does not give the Secretary authority to force all defense contractors to sever commercial ties with Anthropic for non-DoD business. “The Secretary does not have the statutory authority to back up this statement,” the company said.

The $380 Billion Question: What Happens to the IPO?

The timing could not be more perilous for Anthropic’s business trajectory. Just two weeks before this crisis exploded, on February 12, 2026, Anthropic had closed a $30 billion funding round co-led by D.E. Shaw Ventures, ICONIQ, and MGX. The round brought its valuation to a staggering $380 billion, making it the third most valuable private company in the world behind OpenAI and SpaceX.

The company was projecting $14 billion in revenue over the next year, with internal forecasts rising to as high as $18 billion for 2026 and $55 billion by 2027. An IPO was increasingly expected in the second half of 2026.

The Pentagon contract itself, worth up to $200 million, represents less than 2% of projected annual revenue. But the reputational damage, investor uncertainty, and potential domino effect on other enterprise customers could be far more significant. Amodei himself argued the company’s valuation and revenue “have only grown” since it took its stand, but that was before the formal presidential ban.

Independent experts describe the standoff as highly unusual. Jerry McGinn, Director of the Center for the Industrial Base at CSIS (Center for Strategic and International Studies), called it “a very unusual, very public fight… reflective of the nature of AI”.

Why This Matters Beyond Anthropic

This crisis is not merely about one company and one contract. It threatens to set a sweeping and dangerous precedent, and it raises questions that reach well beyond Anthropic:

- Can a U.S. president weaponize “national security” designations against domestic companies that refuse to comply with government demands on ethics?

- Do AI companies have the right — or the responsibility — to set hard limits on how governments use their technology, even when the government says those uses are lawful?

- What does it mean for AI safety if the only companies that get government contracts are those willing to remove all ethical guardrails?

- Will this chill future AI companies from standing up to government demands, making “safety AI” a marketing claim with no enforcement mechanism?
